Bare Metal Recovery (BMR): Fast, Complete System Restore with Catalogic DPX

When disaster strikes—whether it’s ransomware, hardware failure, or human error—businesses need a way to recover entire systems, not just files. This is where Bare Metal Recovery (BMR) comes in. Unlike traditional restore methods that bring back only selected files or applications, BMR can rebuild an entire system environment from scratch, restoring not just the data but also the operating system, applications, and configurations.

In this blog, we’ll dive deep into the definition of BMR, why it matters, how it works, its benefits and challenges, and its evolution. Finally, we’ll explain how Catalogic DPX delivers BMR to ensure business continuity even in the most demanding environments.

Understanding Bare Metal Recovery

What is Bare Metal Recovery (BMR)?

Bare Metal Recovery (BMR) is the process of restoring a complete system—including the operating system (OS), applications, and data—onto a machine that has no software installed, commonly referred to as a bare machine. The term “bare metal” reflects that the restore is being applied to hardware (or a VM) without any pre-installed OS or middleware.

Unlike traditional backup and restore processes, which may require reinstalling the OS and applications before user data can be restored, BMR recreates the entire environment from a single backup image in one restore operation.

Why BMR Matters in Modern IT

In today’s digital environment, downtime is expensive. Gartner estimates the average cost of IT downtime at $5,600 per minute, which adds up to more than $300,000 per hour. Businesses simply cannot afford to spend days rebuilding systems from scratch.
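To put that figure in perspective, the arithmetic is simple enough to sketch. The snippet below assumes a flat per-minute cost (real outage costs vary by business and by time of day) and uses the $5,600 figure quoted above. The function name is illustrative only.

```python
# Illustrative downtime-cost arithmetic, assuming a flat cost per minute.
COST_PER_MINUTE_USD = 5_600  # the average figure quoted above


def downtime_cost(minutes: float, cost_per_minute: float = COST_PER_MINUTE_USD) -> float:
    """Estimated cost of an outage lasting `minutes` at a constant per-minute rate."""
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    return minutes * cost_per_minute


if __name__ == "__main__":
    print(f"One hour of downtime: ${downtime_cost(60):,.0f}")  # $336,000
```

At that rate, every hour shaved off a full-system restore is worth roughly a third of a million dollars.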

BMR matters because:

- Speed: Entire systems can be recovered in hours instead of days.

- Consistency: Restored systems are identical to their state at backup, reducing configuration drift.

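- Ransomware resilience: Combined with immutable backups, BMR can restore a clean system image, reducing the risk of reinfection as long as the selected restore point predates the compromise.

- Flexibility: Enables migrating systems to new hardware or to virtual machines, for example when the original hardware has failed or is no longer available.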

How BMR Works

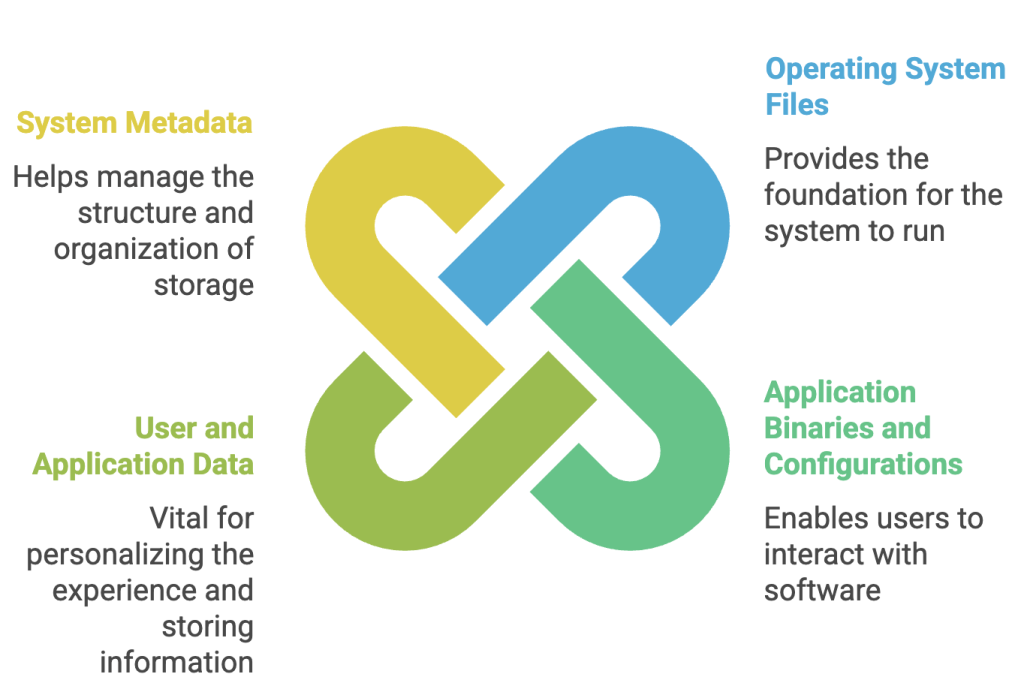

The core principle of BMR is that block-level backups (or system images) are captured and stored. These backups include:

- Operating system files

- Application binaries and configurations

- User and application data

- System metadata (boot records, partition tables, etc.)
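To make the idea of a block-level image concrete, here is a minimal sketch. It copies a source stream in fixed-size blocks and records a SHA-256 digest for each block so the image can be verified later. The 4 KiB block size, the function names, and the use of ordinary file objects are assumptions for illustration; production BMR tools, including DPX, use their own image formats and read raw volumes through OS-specific interfaces.

```python
import hashlib
from typing import BinaryIO, List

BLOCK_SIZE = 4096  # assumed block size for this sketch


def capture_image(source: BinaryIO, image: BinaryIO, block_size: int = BLOCK_SIZE) -> List[str]:
    """Copy `source` into `image` block by block; return one SHA-256 hex digest per block."""
    digests: List[str] = []
    while True:
        block = source.read(block_size)
        if not block:  # end of the source stream
            break
        image.write(block)
        digests.append(hashlib.sha256(block).hexdigest())
    return digests


def verify_image(image: BinaryIO, digests: List[str], block_size: int = BLOCK_SIZE) -> bool:
    """Re-read `image` from its current position and check every block against its digest."""
    for expected in digests:
        block = image.read(block_size)
        if hashlib.sha256(block).hexdigest() != expected:
            return False
    # The image must not contain extra bytes beyond the recorded blocks.
    return image.read(1) == b""
```

Because the copy works below the file system, the OS files, boot records, and partition metadata listed above travel in the image alongside user data.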

The recovery process generally follows these steps:

- Boot media preparation: A bootable ISO, USB, or CD is created by the backup software.

- Bare machine preparation: A new or wiped server is set up with no OS.

- Boot from recovery media: The machine boots into a recovery environment provided by the backup vendor.

- Connect to backup repository: Credentials are provided to locate and access the stored backup.

- Select snapshot: Choose the point-in-time backup for recovery.

- Restore system image: The full backup image is written onto the bare machine.

- Reboot: Once complete, the system boots into the restored environment.
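The "Select snapshot" step usually means picking the newest restore point taken at or before the last known-clean moment, for example just before ransomware was first observed. A minimal sketch of that rule, assuming snapshots are identified only by timestamps (the function name and data model are hypothetical):

```python
from datetime import datetime
from typing import Iterable, Optional


def select_snapshot(snapshots: Iterable[datetime], not_after: datetime) -> Optional[datetime]:
    """Return the newest snapshot taken at or before `not_after`, or None if none qualifies."""
    candidates = [s for s in snapshots if s <= not_after]
    return max(candidates, default=None)


if __name__ == "__main__":
    snaps = [datetime(2024, 5, 1, 2), datetime(2024, 5, 2, 2), datetime(2024, 5, 3, 2)]
    clean_until = datetime(2024, 5, 2, 14, 30)  # hypothetical last known-clean time
    print(select_snapshot(snaps, clean_until))  # 2024-05-02 02:00:00
```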

Use Cases of BMR

- Disaster Recovery: After a hardware failure, cyberattack, or natural disaster, BMR restores systems to an operational state quickly.

- Hardware Refresh: Migrating an old server to new hardware without manual reinstallation.

- Virtualization and Cloud Migration: Restoring physical server images into VMs or cloud instances.

- Lab/Test Environments: Quickly spinning up replicas of production systems for testing or training.

- Industry-Specific Physical Systems: In industries like manufacturing, energy, or healthcare, downtime can halt production lines or critical processes. BMR ensures fast restoration without reinstalling specialized software.

Advantages of BMR

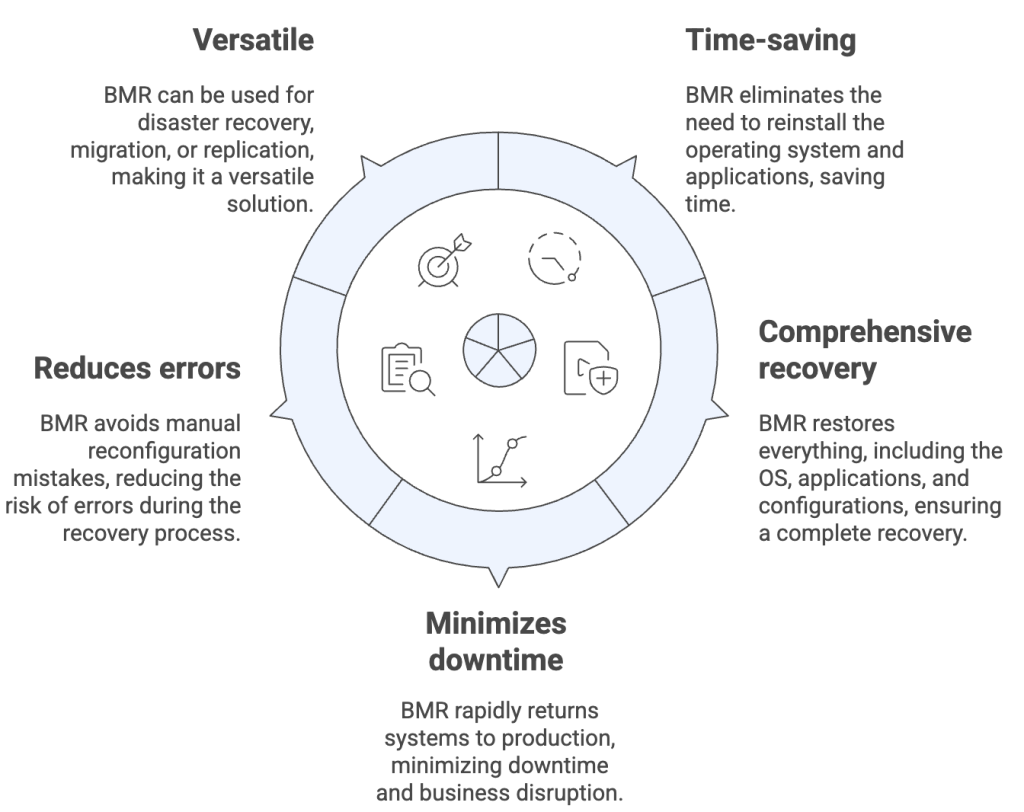

- Time-saving: Eliminates OS and application reinstallation steps.

- Comprehensive recovery: Restores everything from the OS to applications and configurations.

- Minimizes downtime: Rapidly returns systems to production.

- Reduces errors: Avoids manual reconfiguration mistakes.

- Versatile: Can be used for disaster recovery, migration, or replication.

Limitations and Challenges

- Hardware dependency: Restores usually require hardware similar to the original machine.

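- Storage needs: Block-level images are typically larger than file-level backups because they capture the OS and system files as well as data.

- Network speed: Transferring a large system image over a slow link can significantly lengthen recovery time.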

- Complexity: Cross-platform BMR may require additional tools or drivers.

- Licensing and compliance: Some applications may need license reactivation after recovery.
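The network-speed limitation is easy to estimate with back-of-the-envelope arithmetic: transfer time is roughly image size divided by effective throughput. The sketch below assumes a sustained efficiency factor (0.7 by default, an illustrative guess rather than a measured value) to account for protocol overhead and contention; the image size in the example is hypothetical.

```python
def transfer_time_seconds(image_bytes: int, link_bits_per_second: float, efficiency: float = 0.7) -> float:
    """Rough time to move `image_bytes` over a link, given the fraction of line rate actually achieved."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return image_bytes * 8 / (link_bits_per_second * efficiency)


if __name__ == "__main__":
    image = 500 * 10**9  # hypothetical 500 GB system image
    for label, bps in [("1 Gbit/s", 1e9), ("10 Gbit/s", 10e9)]:
        minutes = transfer_time_seconds(image, bps) / 60
        print(f"{label}: about {minutes:.0f} minutes")
```

Even at full line rate, a 500 GB image needs about 4,000 seconds on a 1 Gbit/s link, which is why restore-network bandwidth belongs in recovery-time planning.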

Evolution of BMR

- Virtual Bare Metal Recovery (vBMR): Restoring images into virtual machines for flexibility.

- Cloud-based BMR: Restoring systems into public cloud infrastructure for DRaaS.

- Immutable BMR: Ensuring system images are stored in immutable repositories.

- Automated Orchestrated Recovery: Integrating BMR into recovery workflows for multiple systems.
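Orchestrated recovery largely comes down to restoring systems in dependency order, for example bringing back a directory server before the database that authenticates against it. A minimal sketch using Python's standard-library `graphlib` (Python 3.9+) groups systems into "waves" that could be restored in parallel. The host names and dependencies are hypothetical, and real orchestration tools add health checks, retries, and timeouts on top of this ordering.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def restore_waves(depends_on: Dict[str, Set[str]]) -> List[List[str]]:
    """Group systems into waves; every system's dependencies are restored in an earlier wave.

    Raises graphlib.CycleError if the dependencies are circular.
    """
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # validates the graph and detects cycles
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # systems whose dependencies are all restored
        waves.append(ready)
        sorter.done(*ready)  # mark this wave as restored
    return waves


if __name__ == "__main__":
    plan = {
        "sql01": {"dc01"},   # database needs the directory server
        "file01": {"dc01"},
        "app01": {"sql01"},  # application needs the database
    }
    for i, wave in enumerate(restore_waves(plan), start=1):
        print(f"Wave {i}: {', '.join(wave)}")
```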

How Catalogic DPX Does Bare Metal Recovery

Catalogic DPX offers a robust, disk-based Bare Metal Recovery solution designed for enterprises that need fast, consistent, and secure recovery of entire systems.

Key Features of DPX Bare Metal Recovery

- Block Backup foundation: Captures complete system images, including OS, applications, and data.

- Bootable recovery media: ISO or USB image provided by DPX.

- Simple recovery wizard: Step-by-step guidance with minimal inputs.

- Snapshot-based recovery: Recover to a precise point in time.

- Full system overwrite: Rebuilds OS, system state, and apps in one operation.

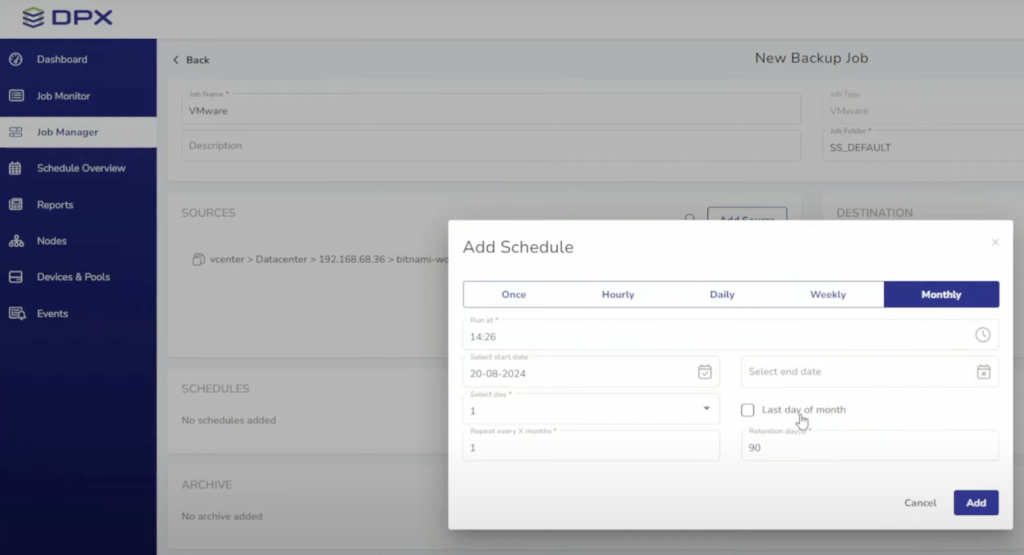

Recovery Workflow with DPX

The recovery process with DPX follows these steps:

- Prepare the bare machine: Ensure the hardware is identical to, or at least compatible with, the original system, and that the disk capacity is at least 10% larger than the source.

- Boot into the BMR environment: Load the DPX ISO or USB and launch the recovery wizard.

- Authenticate storage: In the wizard, connect to your vStor or NetApp repository.

- Select the restore job: Choose the correct node, volume, job, and snapshot.

- Start the restore: Verify your selections, confirm any overwrite prompts, and start the recovery process.

- Reboot: Once the restore completes, reboot the system. Your restored machine is now ready for production use.
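The 10% disk-capacity guideline is easy to check before you boot the recovery media. The helper below is a hypothetical pre-flight check, not part of DPX; it uses integer arithmetic so the result is exact at the boundary. The DPX documentation remains the authoritative source for hardware requirements.

```python
def target_disk_ok(source_bytes: int, target_bytes: int, headroom_percent: int = 10) -> bool:
    """True if the target disk is at least `headroom_percent` larger than the source disk."""
    return target_bytes * 100 >= source_bytes * (100 + headroom_percent)


def min_target_bytes(source_bytes: int, headroom_percent: int = 10) -> int:
    """Smallest target size in bytes that satisfies the headroom rule (rounded up)."""
    return (source_bytes * (100 + headroom_percent) + 99) // 100


if __name__ == "__main__":
    source = 480 * 10**9  # hypothetical 480 GB source disk
    print(f"Target disk needs at least {min_target_bytes(source):,} bytes")
```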

Why DPX BMR Stands Out

DPX BMR offers enterprise-grade reliability, built on decades of Catalogic’s expertise in data protection and recovery. It provides deep application awareness, supporting complete recovery of mission-critical workloads such as Microsoft SQL Server, Exchange, and Oracle. The solution also delivers flexible storage integration, working seamlessly with both vStor and NetApp repositories. Designed for comprehensive disaster recovery, DPX BMR complements DPX replication and immutability features to ensure business continuity. This makes it especially effective for specialized physical systems, as it restores the full system state, including operating system, applications, and data, onto replacement hardware.

Conclusion

Bare Metal Recovery is no longer a “nice-to-have” but a must-have capability for modern IT and OT environments. Whether restoring a cloud VM, a critical SQL Server, or a specialized industrial workstation, BMR ensures organizations can recover not just their data but their entire operational state.

Catalogic DPX Bare Metal Recovery goes beyond standard BMR by combining block backup technology, application awareness, and flexible storage integration. For enterprises in both IT and industry-specific environments, DPX offers the confidence that when disaster strikes, recovery is not just possible—it’s fast, reliable, and complete.