Enhancing Data Recovery with vStor Snapshot Explorer and GuardMode Scan

vStor Snapshot Explorer: Expanding DPX Capabilities

vStor Snapshot Explorer integrates with a range of DPX backup types:

- Agentless VMware backups

- File system backups

- Application-consistent backups (e.g., SQL Server, Oracle, Exchange)

- Bare Metal Recovery (BMR) snapshots

- Hyper-V backups

- Physical server backups

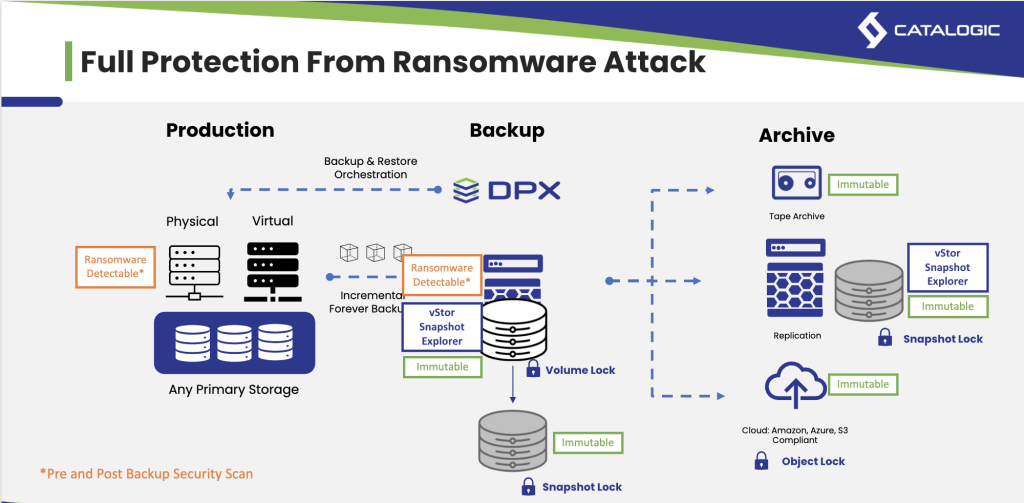

This comprehensive integration enhances the overall functionality of the DPX suite, providing administrators with a unified approach to data recovery across various backup scenarios.

vStor Snapshot Explorer offers several capabilities that make data recovery faster and more flexible, giving administrators a single toolset for exploring and restoring backed-up data:

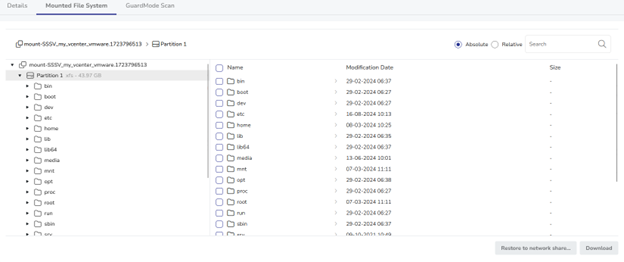

- Direct Mounting: Quickly mount disk images from backups without full restoration, saving time and resources.

- Intuitive Interface: Browse filesystem content easily through the vStor UI, improving efficiency in data exploration and recovery.

- Broad Compatibility: Works with numerous DPX backup types, ensuring versatility across diverse IT environments.

- Granular Recovery: Restore specific files or folders without the need for a full system recovery.

- Network Share Restoration: Directly restore data to network shares, bypassing local storage limitations.

Because vStor Snapshot Explorer works with so many DPX backup types, administrators can use it across a wide range of backup scenarios in diverse IT environments.

GuardMode Scan: Enhancing Security in Data Exploration and Recovery

GuardMode Scan offers several key functionalities that enhance the security and reliability of the data recovery process:

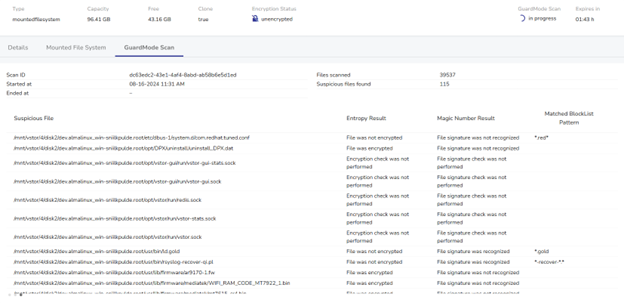

- Automated Scanning: Scans mounted filesystems for potential ransomware infections or data encryption, providing a comprehensive security check before data restoration.

- Real-time Analysis: Displays detected suspicious files as the scan progresses, allowing for immediate assessment and decision-making during the recovery process.

- Comprehensive Reporting: Provides detailed information on suspicious files, including:

– Entropy levels (indicating potential encryption)

– Magic number mismatches (suggesting file type inconsistencies)

– Matches against known malware patterns

- Snapshot Timeline Analysis: Enables administrators to scan multiple snapshots chronologically, helping identify the point of infection or data corruption.

- Integration with Recovery Workflow: Seamlessly incorporates security checks into the recovery process, ensuring that only clean data is restored to production environments.
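To make the entropy and magic-number indicators listed above more concrete, here is a minimal Python sketch of how such checks can work in principle. It is not GuardMode's implementation: the function names, the small signature table, and the 7.5 bits-per-byte threshold are all assumptions chosen for illustration.

```python
import math
from collections import Counter

# Illustrative signature table (an assumption for this sketch);
# real scanners rely on much larger signature databases.
MAGIC_NUMBERS = {
    ".pdf": b"%PDF",
    ".png": b"\x89PNG\r\n\x1a\n",
    ".zip": b"PK\x03\x04",
    ".jpg": b"\xff\xd8\xff",
}


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def magic_mismatch(filename: str, header: bytes) -> bool:
    """Return True when the extension is known but the header does not match it."""
    name = filename.lower()
    for extension, magic in MAGIC_NUMBERS.items():
        if name.endswith(extension):
            return not header.startswith(magic)
    return False  # Unknown extension: nothing to compare against.


def assess(filename: str, data: bytes, entropy_threshold: float = 7.5) -> dict:
    """Combine both indicators for one file; the threshold is a hypothetical value."""
    entropy = shannon_entropy(data)
    return {
        "file": filename,
        "entropy": round(entropy, 2),
        "high_entropy": entropy > entropy_threshold,
        "magic_mismatch": magic_mismatch(filename, data[:16]),
    }


if __name__ == "__main__":
    # A file named .pdf whose content is uniformly distributed bytes
    # trips both indicators.
    print(assess("report.pdf", bytes(range(256)) * 4))
```

Neither indicator is conclusive on its own. Compressed formats such as ZIP or JPEG naturally have high entropy, which is why the two signals are more useful when read together: a file that claims to be a PDF but has near-random content and no PDF header is far more suspicious than a high-entropy archive.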

GuardMode Scan not only enhances the security of the data recovery process but also provides several key benefits that address critical concerns in modern data protection strategies:

- Proactive Threat Detection: Identifies potential security issues before they impact production systems, reducing the risk of data breaches or ransomware spread.

- Informed Decision Making: Provides administrators with detailed insights into the state of backed-up data, allowing for more informed recovery decisions.

- Compliance Support: Helps organizations meet regulatory requirements by ensuring the integrity and security of recovered data.

- Reduced Recovery Time: By identifying clean snapshots quickly, GuardMode Scan can significantly reduce the time spent on trial-and-error recovery attempts.

- Enhanced Confidence in Backups: Regular scanning of backup snapshots helps confirm that the organization’s data protection strategy remains effective against evolving threats.

By incorporating GuardMode Scan into the recovery workflow, administrators can confidently restore data, knowing that potential threats have been identified and mitigated. This integration of security and recovery processes represents a significant advancement in data protection strategies, addressing the growing concern of malware persistence in backup data.

Practical Applications of vStor Snapshot Explorer

- Granular File Recovery: An administrator needs to recover a single critical file from a 2 TB VM backup. Instead of restoring the entire VM, they can mount the backup using vStor Snapshot Explorer, browse to the specific file, and restore it directly. This process can reduce recovery time from hours to minutes.

- Data Validation Before Full Restore: Before performing a full restore of a production database, an administrator mounts the backup snapshot and uses GuardMode Scan to verify the integrity of the data. This step ensures that no corrupted or potentially infected data is introduced into the production environment.

- Audit Compliance: During an audit, an organization needs to provide historical financial data from a specific date. Using vStor Snapshot Explorer, the IT team can quickly mount a point-in-time backup, locate the required files, and provide them to auditors without disrupting current systems.

- Testing and Development: Development teams require a copy of production data for testing. Instead of creating a full clone, administrators can use vStor Snapshot Explorer to mount a backup snapshot, allowing developers to access necessary data without impacting storage resources or compromising production systems.

- Ransomware Recovery: After a ransomware attack, the IT team uses vStor Snapshot Explorer to mount multiple snapshots from different points in time. By utilizing GuardMode Scan on these snapshots, they can identify the most recent clean backup, minimizing data loss while ensuring a malware-free recovery.
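The selection logic in the ransomware recovery scenario above can be sketched in a few lines of Python. This assumes the scan results for each snapshot have already been collected into simple records; the `SnapshotScan` type, its field names, and both helper functions are hypothetical and do not represent a DPX or vStor API.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class SnapshotScan:
    """Hypothetical record of one snapshot's scan result."""
    snapshot_id: str
    taken_at: datetime
    suspicious_files: int


def latest_clean_snapshot(scans: Iterable[SnapshotScan]) -> Optional[SnapshotScan]:
    """Return the most recent snapshot with no suspicious files, or None."""
    clean = [s for s in scans if s.suspicious_files == 0]
    return max(clean, key=lambda s: s.taken_at, default=None)


def first_suspicious_snapshot(scans: Iterable[SnapshotScan]) -> Optional[SnapshotScan]:
    """Return the earliest snapshot with suspicious files, or None."""
    flagged = [s for s in scans if s.suspicious_files > 0]
    return min(flagged, key=lambda s: s.taken_at, default=None)


if __name__ == "__main__":
    # Made-up sample data for illustration only.
    scans = [
        SnapshotScan("snap-01", datetime(2024, 1, 1), 0),
        SnapshotScan("snap-02", datetime(2024, 1, 2), 0),
        SnapshotScan("snap-03", datetime(2024, 1, 3), 4),
    ]
    print("Restore candidate:", latest_clean_snapshot(scans))
    print("Likely point of infection:", first_suspicious_snapshot(scans))
```

Looking at both results together is useful: the earliest flagged snapshot approximates the point of infection, and the latest clean snapshot is the candidate that minimizes data loss.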

Optimizing Recovery Strategies with vStor Snapshot Explorer

- Reduced Recovery Time Objectives (RTOs): By allowing direct mounting and browsing of backup snapshots, vStor Snapshot Explorer significantly reduces the time needed to access and restore critical data. This capability helps organizations meet more aggressive RTOs without the need for costly always-on replication solutions.

- Improved Recovery Point Objectives (RPOs): The ability to quickly scan and verify the integrity of multiple snapshots allows organizations to confidently maintain more frequent backup points. This flexibility supports tighter RPOs, minimizing potential data loss in recovery scenarios.

- Enhanced Data Governance: vStor Snapshot Explorer’s browsing capabilities, combined with GuardMode Scan, provide improved visibility into backed-up data. This enhanced oversight supports better data governance practices, helping organizations maintain compliance with data protection regulations.

- Streamlined Backup Testing: Regular mounting and verification of backup snapshots become more feasible with vStor Snapshot Explorer, encouraging more frequent and thorough backup testing. This practice enhances overall backup reliability and readiness for recovery scenarios.

- Efficient Storage Utilization: By enabling granular file recovery and snapshot browsing without full restoration, vStor Snapshot Explorer helps organizations optimize storage usage in recovery scenarios, potentially reducing the need for extensive recovery storage infrastructure.

Elevating Your Data Protection Strategy with vStor Snapshot Explorer

Ready to enhance your data recovery capabilities? Contact our sales team today to learn how these tools can augment your existing data protection suite and provide greater control over your backup and recovery processes.